
ID3 algorithm – Implementation in C

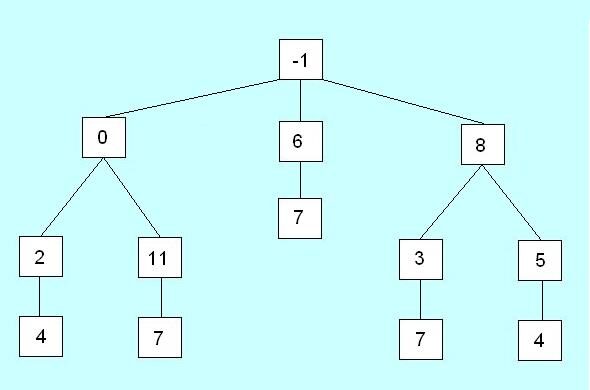

This project is a C implementation of ID3, the brilliant algorithm invented by Ross Quinlan. The program builds a decision tree from a categorized dataset and extracts classification rules from it.

DAY OUTLOOK TEMPERATURE HUMIDITY WIND PLAY BALL D1 Sunny Hot High…